Model Context Protocol

Today I learned how to build, configure, and run a local Model Context Protocol server. The Model Context Protocol1 is an open protocol that enables seamless integration between language model applications and external data sources and tools.

The better half and I have had an ongoing discussion about how foolish folks can be with artificial intelligence. She regularly gets AI-crafted emails from colleagues and students, so we like to read them aloud for fun. We are both fascinated and skeptical about the future of AI, but mostly lean toward the skeptical side. I've mostly been harping on the pitfalls of agentic engineering patterns as they relate to my work, but now it's becoming my work.

I first realized this when I started getting requests to fix codebases or systems that had 'gotten away' from the developer, mostly through the use of AI. Then I started to realize that my cheap assistant was doing more and more of the development work. More recently, I've been learning about long-running agents that do all the work.

AI assistants embedded in the web, apps, editors, or terminals seem smart but are relatively blind to your work. The recent hullabaloo about Anthropic's CoWork cutting into other service as software models is a good example. The plugins for Claude are essentially hosted MCP servers which give it access to other software and data. The Model Context Protocol was released at the end of 2024 as an open standard and, as usual, I'm a late adopter.

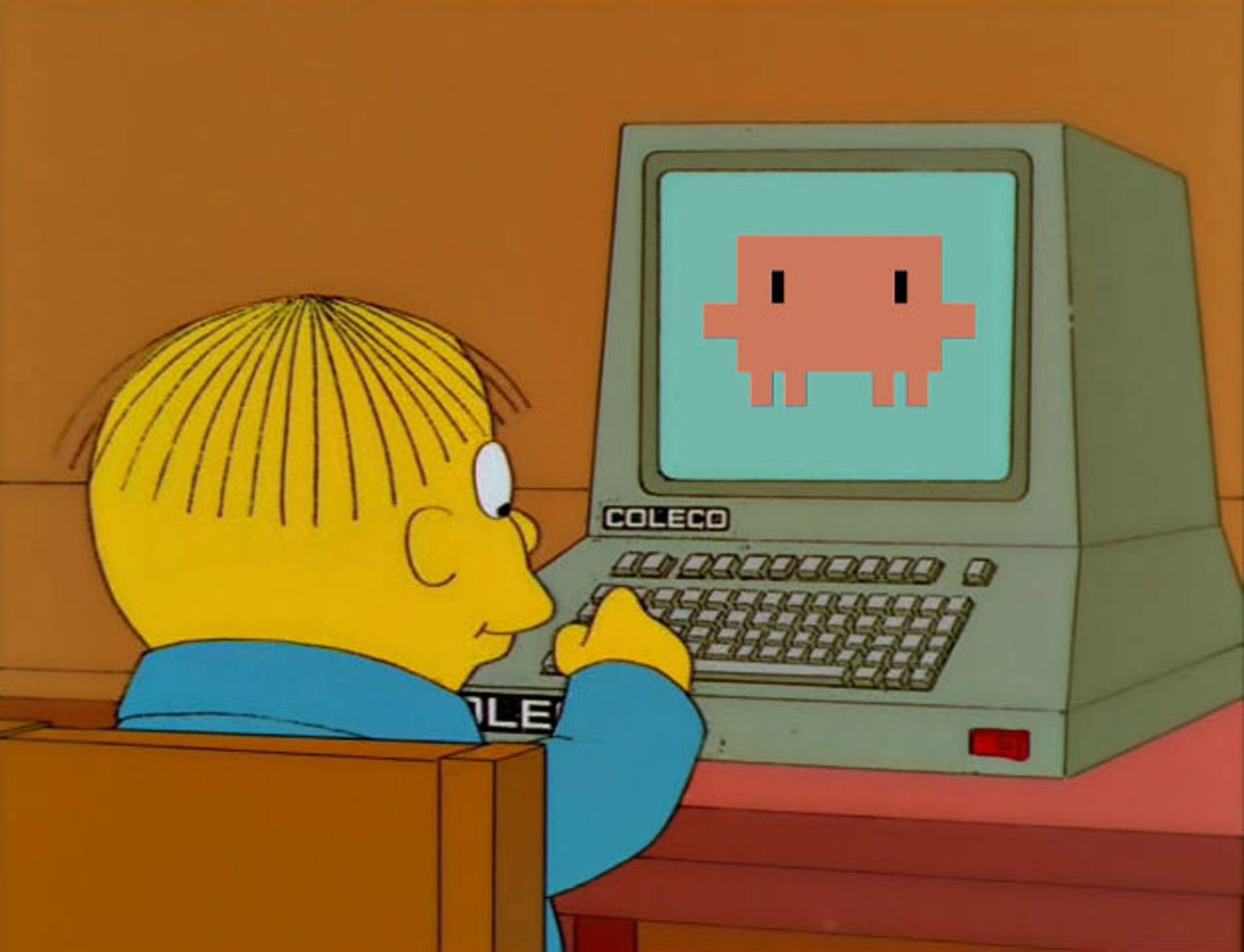

Ralph

A buddy of mine is deep down the AI rabbit hole, but he's also more experienced, a former systems engineer and pretty damn sharp. He sent me a video2 about the web forking over to agents and it got me thinking. I put together a local filesystem server called ralph-fs3 as an exercise, partly to understand the protocol from the inside and partly because I wanted Claude Code to have read-write access to a couple of local directories without me copy-pasting paths all day. The server is TypeScript, uses the official @modelcontextprotocol/sdk, and exposes seven tools: read_file, write_file, list_directory, create_directory, file_info, delete_file, and search_files. It's registered via a .mcp.json file in the project root and enabled through the terminal and desktop apps.
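For the curious, here is a rough sketch of what that .mcp.json registration might look like, written as TypeScript so the shape is typed. The `mcpServers` layout with `command`, `args`, and `env` is the usual project-scoped format; the script path `./dist/index.js`, the directory names, and the idea of passing allowed directories as arguments are placeholders I made up for illustration, not necessarily how ralph-fs is wired.

```typescript
// One stdio server entry as it appears under "mcpServers" in .mcp.json.
interface StdioServerEntry {
  command: string;
  args?: string[];
  env?: Record<string, string>;
}

interface McpProjectConfig {
  mcpServers: Record<string, StdioServerEntry>;
}

// Hypothetical helper: builds the config object for a Node-based server.
// Passing the allowed directories as CLI arguments is an assumption.
function buildConfig(serverScript: string, allowedDirs: string[]): McpProjectConfig {
  return {
    mcpServers: {
      "ralph-fs": {
        command: "node",
        args: [serverScript, ...allowedDirs],
      },
    },
  };
}

// Placeholder paths; swap in your own build output and directories.
const config = buildConfig("./dist/index.js", ["./notes", "./projects"]);

// The printed JSON is what would be saved as .mcp.json in the project root.
console.log(JSON.stringify(config, null, 2));
```

Writing the printed JSON to .mcp.json in the project root is what lets the client discover the server and launch it over stdio.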

My skepticism had me thinking carefully about path safety and pushed me toward a deeper understanding of what the server actually does. It needs to enforce that no tool call escapes the allowed directory tree, because you're essentially handing the model a shell that can write files. I ended up with a simple allowlist resolved at call time: every path gets canonicalized and checked against the list before anything happens. It's not complicated, but it's the kind of thing you have to think through before you just wire up the filesystem and hand it to an agent.
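A minimal sketch of that canonicalize-then-check idea, using only Node's built-in `fs` and `path` modules. The function names `canonicalize` and `isPathAllowed` are mine for illustration and not necessarily what ralph-fs uses. One assumption baked in: paths that don't exist yet (a write_file target, say) are resolved through their nearest existing ancestor.

```typescript
import * as fs from "node:fs";
import * as os from "node:os";
import * as path from "node:path";

// Resolve symlinks on the deepest existing ancestor, then re-append the
// not-yet-existing tail so new files can still be checked.
function canonicalize(p: string): string {
  let existing = path.resolve(p);
  const missing: string[] = [];
  while (!fs.existsSync(existing)) {
    const parent = path.dirname(existing);
    if (parent === existing) break; // reached the filesystem root
    missing.unshift(path.basename(existing));
    existing = parent;
  }
  return path.join(fs.realpathSync(existing), ...missing);
}

// True only if the canonical target is an allowed root or sits beneath one.
function isPathAllowed(candidate: string, allowedRoots: string[]): boolean {
  const target = canonicalize(candidate);
  return allowedRoots.some((root) => {
    const rel = path.relative(canonicalize(root), target);
    const escapes = rel === ".." || rel.startsWith(".." + path.sep);
    return !escapes && !path.isAbsolute(rel);
  });
}

// Usage with a throwaway directory standing in for an allowed root.
const root = fs.mkdtempSync(path.join(os.tmpdir(), "ralph-"));
console.log(isPathAllowed(path.join(root, "notes", "todo.md"), [root]));
console.log(isPathAllowed(path.join(root, "..", "etc", "passwd"), [root]));
```

Comparing with `path.relative` rather than a plain string prefix avoids the classic mistake where `/data/allowed-evil` passes a check for `/data/allowed`. This sketch doesn't handle every edge case (a dangling symlink at the target, for one), so treat it as a starting point.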

It's named Ralph4 because of a recent reference to an almost completely autonomous style of building software. As I get it up to speed, I'll add more tools, utilities, and skills. Eventually I'll have a setup that's customized to the type of work I do most often and completely familiar with my servers and projects.

Doing Things

What MCP really represents is a step toward AI that can act rather than just advise. The agentic framing has been floating around for a couple of years now but it's mostly felt speculative. A clean, open protocol for tool use starts to make it concrete. You can write an MCP server for anything — your local files, a database, a REST API, a browser, a calendar — and the model can compose those tools to accomplish real tasks. The composition is the thing. A model that can read a file, reason about it, write a modified version back, and then call a build tool has meaningfully different capabilities than one that can only narrate what it would do if it could.
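To make the composition idea a bit more concrete, here is a small sketch of the messages a client might send when a model chains two of the tools above. MCP runs on JSON-RPC 2.0 and tool invocations use the `tools/call` method with a `name` and `arguments`. The argument names (`path`, `content`) and the file path are assumptions for illustration, since each server defines its own input schema.

```typescript
// Shape of an MCP tool invocation over JSON-RPC 2.0.
interface ToolCallRequest {
  jsonrpc: "2.0";
  id: number;
  method: "tools/call";
  params: { name: string; arguments: Record<string, unknown> };
}

let nextId = 1;

// Hypothetical helper that wraps a tool name and its arguments in a request.
function toolCall(name: string, args: Record<string, unknown>): ToolCallRequest {
  return {
    jsonrpc: "2.0",
    id: nextId++,
    method: "tools/call",
    params: { name, arguments: args },
  };
}

// Read a file, then write a modified version back.
// In a real session the model decides the second call after seeing the
// result of the first; here both are built up front just to show the shape.
const steps: ToolCallRequest[] = [
  toolCall("read_file", { path: "notes/todo.md" }),
  toolCall("write_file", { path: "notes/todo.md", content: "- [x] ship ralph-fs\n" }),
];

for (const step of steps) {
  console.log(JSON.stringify(step));
}
```

Each request is small and boring on its own. The interesting part is that the model picks the sequence.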

And it's coming for everything. Everything. Most folks will just be granting Gemini access to their email and calendars or installing various plugins. I'm a local-first, privacy-minded fella, so it'll all be custom for me. Because it's published as an open spec rather than a proprietary feature, Microsoft, Google, and a handful of others have already adopted it5. It has the shape of something that could standardize the way models interact with software environments the same way LSP6 standardized the way editors interact with language tooling.

For now, I'm limiting Ralph to a very small subset of my work. And while the market for it will likely be virtual assistants for most, I'd like to keep mine focused. I manage a lot of websites and a handful of servers. Eventually I'll be connecting my local agent to those machines and projects. Even the WordPress websites will be able to use the protocol7, and a lot of work with software will be powered by autonomous agents. Some of it will be framed within the agent interface8 as embedded extension apps9.